How to export your Databricks configuration using Desired State Configuration

Learn how to export your existing Databricks instance configuration

You've just joined a new company and your first task is to set up a development environment that mirrors production. The Databricks workspace has a lot of configuration: dozens of users, groups, service principals, and secret scopes.

All configured over months of careful work without automation. How do you capture all of that? Are you going to click through the UI? Parse API responses with custom scripts? Or even worse, ask your fresh new colleagues what they configured six months ago?

Whilst my heart always goes to "automation first", I've been in that exact situation myself. But what if I told you there's now a command that captures your Databricks configuration as code?

That's precisely what the new Export functionality in DatabricksDsc makes possible. And I wish I'd had it back then.

In this blog post, you will learn all about it.

Prerequisites

If you want to try it out for yourself, you're going to need:

- An Azure Databricks instance.

- DatabricksDsc PowerShell module v0.6.0 or above.

- Microsoft DSC v3.2.0-preview.9 or above.

The export challenge in PowerShell DSC

For years, PowerShell Desired State Configuration (DSC) has been the go-to solution for Windows administrators managing infrastructure as code. You define the desired state, and DSC brings the system in line with it.

But there's always been a gap.

You could retrieve the current state of an instance using the Get operation. But that only covers a single instance. What if you wanted multiple instances, say all users from your Databricks instance?

Traditional PSDSC never provided a native export capability. If you wanted to document an existing configuration, you would need to create a custom script to parse the output and construct your DSC configuration document.

Microsoft's DSC changes this.

Microsoft DSC and the export command

Microsoft's DSC team introduced a proper export command. This wasn't just an incremental improvement. It was a fundamental shift in how we think about configuration management, driven by a partnership with the WinGet team.

WinGet faced similar challenges in the application configuration landscape. WinGet needed a way to export current application states, and, together with the DSC team, they built a generalized export capability that benefited not only WinGet but also the entire configuration management ecosystem.

What does this have to do with the DatabricksDsc module? Because export is now part of the engine, every resource can implement a static Export() method that discovers existing resources and returns them as ready-made objects. You instantly get documentation and reproducibility in a single command. Let's take a look.

Using DSC's engine to export resources

The most straightforward way to export resources is using the dsc.exe command-line tool. This approach gives you native YAML or JSON output that integrates directly with DSC configuration workflows.

Before we can export resources, we need to identify which resources support exporting. To do so, you can run the following command:

dsc resource list |
    ConvertFrom-Json |
    Where-Object { $_.type -like 'DatabricksDsc*' -and $_.capabilities -contains 'export' }

The output:

type : DatabricksDsc/DatabricksAccountMetastoreAssignment

kind : resource

version : 0.6.0

capabilities : {get, test, set, export}

path : C:\Program Files\PowerShell\Modules\DatabricksDsc\0.6.0\DatabricksDsc.psm1

description : This module contains class-based DSC resources for Databricks and Unity Catalog with a focus on Azure.

directory : C:\Program Files\PowerShell\Modules\DatabricksDsc\0.6.0

implementedAs : 1

author :

properties : {WorkspaceUrl, AccessToken, Reasons, AccountsUrl…}

requireAdapter : Microsoft.DSC/PowerShell

target_resource :

manifest :

type : DatabricksDsc/DatabricksAccountWorkspacePermissionAssignment

kind : resource

version : 0.6.0

capabilities : {get, test, set, export}

path : C:\Program Files\PowerShell\Modules\DatabricksDsc\0.6.0\DatabricksDsc.psm1

description : This module contains class-based DSC resources for Databricks and Unity Catalog with a focus on Azure.

directory : C:\Program Files\PowerShell\Modules\DatabricksDsc\0.6.0

implementedAs : 1

author :

properties : {WorkspaceUrl, AccessToken, Reasons, AccountsUrl…}

requireAdapter : Microsoft.DSC/PowerShell

target_resource :

manifest :

# truncated

Now, imagine you want to export all current users in your instance. You can run the following command:

$workspaceUrl = '<workspaceUrl>' # Replace with your workspace

$accessToken = '<accessToken>'

$jsonInput = @{workspaceUrl = $workspaceUrl; accessToken = $accessToken} | ConvertTo-Json

dsc resource export --resource DatabricksDsc/DatabricksUser --input $jsonInput

Exporting to a file

If you want to export the results to a file so you can apply them to another instance, you can redirect to a file:

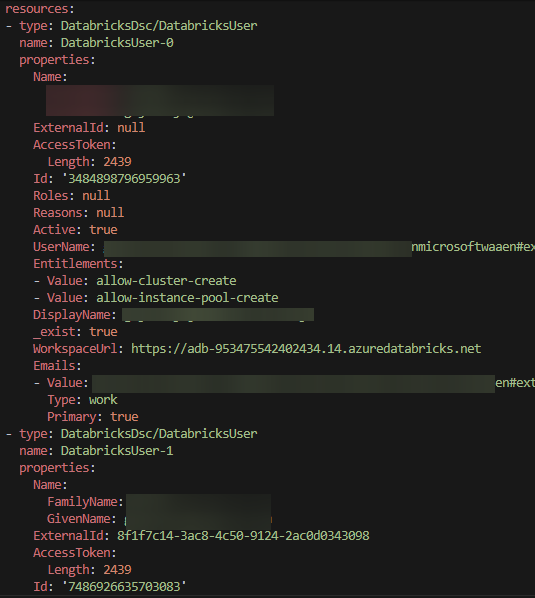

dsc resource export --resource DatabricksDsc/DatabricksUser --input $jsonInput --output-format yaml > databricks-users.dsc.config.yaml

Of course, there's a small gotcha here. Not all properties are configurable, and some come back in a different form than the one you have to supply. For example, it doesn't make sense to set the Id property, and the AccessToken has to be passed as plaintext (not the Length property you see in the image above). Once you've cleaned these properties up in the document, you can apply it to a different instance using dsc config set.
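If you'd rather not clean the document by hand, a small script can do it for you. The sketch below is only an illustration: it assumes you exported with --output-format json instead of YAML, and that each entry under resources carries its settings under properties. The function name Remove-DemoExportedProperty and the sample document are made up for this post and are not part of DatabricksDsc.

```powershell
# Hypothetical helper: removes properties you should not set and restores the token.
function Remove-DemoExportedProperty {
    param(
        [Parameter(Mandatory)] [string] $Json,
        [string[]] $PropertyName = @('Id'),
        [string] $AccessToken
    )

    $document = ConvertFrom-Json -InputObject $Json
    foreach ($resource in $document.resources) {
        $properties = $resource.properties
        foreach ($name in $PropertyName) {
            # Only remove the property when it is actually present.
            if ($properties.PSObject.Properties[$name]) {
                $properties.PSObject.Properties.Remove($name)
            }
        }
        # Replace the exported token placeholder with the plaintext value.
        if ($AccessToken -and $properties.PSObject.Properties['AccessToken']) {
            $properties.AccessToken = $AccessToken
        }
    }
    ConvertTo-Json -InputObject $document -Depth 10
}

# Made-up sample that mimics the assumed shape of an exported document.
$sample = @'
{
  "resources": [
    {
      "type": "DatabricksDsc/DatabricksUser",
      "name": "example-user",
      "properties": {
        "Id": "123",
        "UserName": "alice@example.com",
        "AccessToken": { "Length": 36 }
      }
    }
  ]
}
'@

$cleaned = Remove-DemoExportedProperty -Json $sample -AccessToken '<accessToken>'
$cleaned
```

The helper takes the list of property names as a parameter, so you can extend it if you find other properties that shouldn't travel to the new instance.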

Exporting multiple resource types

You can export different resource types and eventually combine them into one big document:

# Export groups
dsc resource export --resource DatabricksDsc/DatabricksGroup --input $jsonInput --output-format yaml > databricks-groups.dsc.yaml

# Export secret scopes
dsc resource export --resource DatabricksDsc/DatabricksSecretScope --input $jsonInput --output-format yaml > databricks-scopes.dsc.yaml

# Export secrets (metadata only - values are not returned by API)
dsc resource export --resource DatabricksDsc/DatabricksSecret --input $jsonInput --output-format yaml > databricks-secrets.dsc.yaml

All three commands create separate documents. If you want to combine them into one big document, copy the entries under each file's resources list into a single configuration document.
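Combining the documents can be scripted as well. Here's a minimal sketch, assuming the exports were written as JSON (--output-format json) and that each document has a top-level resources list. Merge-DemoConfigurationDocument is a hypothetical name, and the two sample documents are invented for the example.

```powershell
# Hypothetical helper: merges the resources of several exported documents.
function Merge-DemoConfigurationDocument {
    param(
        [Parameter(Mandatory)] [string[]] $Json
    )

    $documents = @(foreach ($text in $Json) { ConvertFrom-Json -InputObject $text })

    $merged = [ordered]@{
        # Reuse the schema reference of the first document.
        '$schema' = $documents[0].'$schema'
        # Flatten every document's resources into one list.
        resources = @($documents | ForEach-Object { $_.resources })
    }
    ConvertTo-Json -InputObject $merged -Depth 10
}

# Made-up samples standing in for two exported files.
$groups = @'
{ "$schema": "<schema-uri>", "resources": [
  { "type": "DatabricksDsc/DatabricksGroup", "name": "group-a", "properties": { "DisplayName": "admins" } },
  { "type": "DatabricksDsc/DatabricksGroup", "name": "group-b", "properties": { "DisplayName": "readers" } }
] }
'@
$scopes = @'
{ "$schema": "<schema-uri>", "resources": [
  { "type": "DatabricksDsc/DatabricksSecretScope", "name": "scope-a", "properties": { "ScopeName": "dev" } }
] }
'@

$combined = Merge-DemoConfigurationDocument -Json $groups, $scopes
$combined
```

With real files, you would pass the output of Get-Content -Raw for each exported file instead of the inline samples.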

How export works in DatabricksDsc

Under the hood, DatabricksDsc implements export functionality across its resources. Each resource class includes a static Export() method that:

- Connects to your Databricks instance (or account).

- Queries the relevant APIs to discover all existing resources.

- Returns an array of configured resource instances.
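To make those three steps more concrete, here's a stripped-down sketch of what a static Export() method can look like. It is not the actual DatabricksDsc code: DemoUser and Get-DemoUserData are hypothetical, the "API call" returns hard-coded data, and I left out the [DscResource()] attribute, the key property, and the Get(), Set(), and Test() methods a real class-based resource needs.

```powershell
# Hypothetical stand-in for a Databricks API call; returns hard-coded data.
function Get-DemoUserData {
    @(
        @{ userName = 'alice@example.com'; active = $true }
        @{ userName = 'bob@example.com'; active = $false }
    )
}

# Simplified class, for illustration only.
class DemoUser {
    [string] $UserName
    [bool] $Active

    # Discover every existing user and return one instance per user.
    static [DemoUser[]] Export() {
        $instances = @()
        foreach ($raw in (Get-DemoUserData)) {
            $instance = [DemoUser]::new()
            $instance.UserName = $raw.userName
            $instance.Active = $raw.active
            $instances += $instance
        }
        return $instances
    }
}

$exported = [DemoUser]::Export()
$exported | Format-Table UserName, Active
```

The important part is the shape: a static method on the resource class that returns an array of instances of that same class, which the engine can then turn into a document.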

It's also possible to use a filter. For example, if you only want to return the active users, you can add the Active property:

# Rebuild JSON input

$jsonInput = @{workspaceUrl = $workspaceUrl; accessToken = $accessToken; Active = $true} | ConvertTo-Json

dsc resource export --resource DatabricksDsc/DatabricksUser --input $jsonInput

The static Export() method uses the properties you pass in as a filter, searches for active users only, and returns the result to DSC's engine.
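Conceptually, filtering on properties boils down to something like the sketch below. This is my own simplified illustration, not the module's implementation: Select-DemoInstance is a hypothetical function, the users are made up, and connection properties such as workspaceUrl and accessToken are assumed to be left out of the filter.

```powershell
# Hypothetical helper: keeps only the instances that match every filter property.
function Select-DemoInstance {
    param(
        [Parameter(Mandatory)] [object[]] $Instance,
        [Parameter(Mandatory)] [hashtable] $Filter
    )

    foreach ($item in $Instance) {
        $isMatch = $true
        foreach ($key in $Filter.Keys) {
            if ($item.$key -ne $Filter[$key]) {
                $isMatch = $false
                break
            }
        }
        if ($isMatch) { $item }
    }
}

# Made-up users standing in for what the API would return.
$users = @(
    [pscustomobject]@{ UserName = 'alice@example.com'; Active = $true }
    [pscustomobject]@{ UserName = 'bob@example.com'; Active = $false }
)

$activeUsers = @(Select-DemoInstance -Instance $users -Filter @{ Active = $true })
$activeUsers
```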

Conclusion

If you were on a tight deadline to spin up a fresh Databricks instance, you now have the power to do it easily. And the most beautiful part about it is that it is completely reproducible and repeatable.

As the module continues to grow, more resources will gain the Export capability. But even today, you can document users, groups, service principals, secret scopes, and secrets (to a limited extent). Nine times out of ten, these make up the foundation of any Databricks instance.

For more information, visit the DatabricksDsc GitHub repository and check out the examples in the documentation.